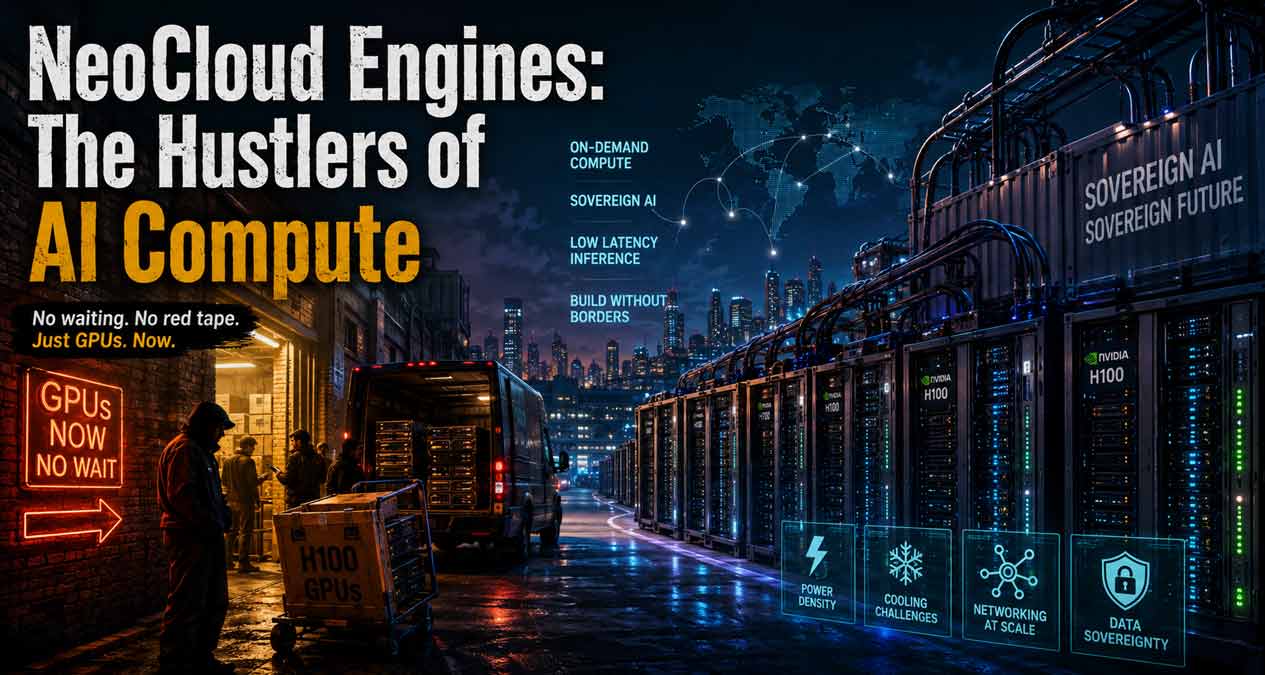

Walk into any AI startup office right now and you’ll hear the same refrain: “Where the hell do we get GPUs?” It’s not a technical question anymore; it’s existential. Models are ready, investors are impatient, customers are waiting, and the bottleneck isn’t talent or ideas. It’s silicon. Out of this chaos, a new breed of infrastructure operator has appeared: NeoCloud Engines.

They’re not polished hyperscalers with glossy keynote decks. They’re scrappy, sometimes chaotic, often brilliant outfits that exist because founders can’t afford to wait six months for AWS to free up capacity. Neoclouds are the back‑alley dealers of compute, and right now, they’re indispensable.

The Mood on the Ground

If you’ve ever tried to spin up a cluster during crunch time, you know the frustration. You refresh dashboards, call account reps, beg for quota increases. Nothing moves. Meanwhile, your burn rate ticks upward and your engineers are stuck throttling inference requests, doling out capacity like ration cards.

NeoCloud Engines step into that emotional gap. They say: “We’ve got GPUs. Not next quarter. Now.” For a founder, that’s oxygen. For an investor, it’s survival math. For engineers, it’s the difference between shipping and stalling.

And here’s the operational reality: hyperscalers allocate GPU quota based on long‑term commitments and internal prioritization. If you’re not a Fortune 50 customer, you’re at the back of the line. Neoclouds bypass that system entirely and offer raw access, even if their clusters run at only 60–70% utilization because orchestration isn’t as polished. For a startup, imperfect utilization is still better than no capacity at all.

Why They Exist Now

Timing matters. Training frontier models may grab headlines, but inference is the silent monster eating budgets. Billions of queries, each demanding low latency, each stacking up into a wall of compute. Hyperscalers, with their sprawling bureaucracy, simply can’t flex fast enough.

Neoclouds thrive because they don’t pretend to be infinite. They’re finite, opportunistic, and brutally honest about it. They buy GPUs wherever they can—secondary markets, sovereign contracts, colocation centers—and wire them into clusters that are good enough to keep your product alive. It’s not elegant, but it works.

Behind the scenes, the bottleneck isn’t just GPUs; it’s networking. Many NeoCloud operators struggle with east‑west traffic, the GPU‑to‑GPU chatter inside a cluster. NVLink (within a server) and InfiniBand (between servers) are expensive, hard to source, and critical for scaling training and large‑model inference. Some Neoclouds cut corners with commodity Ethernet, which works fine for smaller models but becomes a choke point in multi‑node training, where every step ends with GPUs exchanging gradients across the network. Customers learn quickly: you’re not just buying GPUs, you’re buying the interconnect fabric that makes them usable.
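To see why the fabric matters, here is a back‑of‑envelope sketch of the gradient synchronization a data‑parallel training step performs. It uses the textbook bandwidth cost of a ring all‑reduce, in which each of N participants sends roughly 2(N-1)/N of the payload, and it deliberately ignores latency, congestion, and overlap with compute. The model size, node count, and link speeds are hypothetical, chosen only to show how the cost scales.

```python
def ring_allreduce_seconds(payload_bytes: float, participants: int, link_gbps: float) -> float:
    """Bandwidth-only lower bound on the time for one ring all-reduce.

    In a ring all-reduce each participant sends (and receives) 2 * (N - 1) / N
    times the payload. Latency, congestion, and overlap with compute are ignored,
    so a real step takes at least this long.
    """
    if participants < 2:
        return 0.0  # a single participant has nothing to synchronize
    bytes_per_second = link_gbps * 1e9 / 8  # Gbit/s -> bytes/s
    bytes_on_wire = 2 * (participants - 1) / participants * payload_bytes
    return bytes_on_wire / bytes_per_second


if __name__ == "__main__":
    # Hypothetical example: 7B-parameter model, fp16 gradients (2 bytes each),
    # 16 nodes, each treated as one ring participant with a single network link.
    grad_bytes = 7e9 * 2
    for gbps in (400, 100, 25):
        t = ring_allreduce_seconds(grad_bytes, participants=16, link_gbps=gbps)
        print(f"{gbps:>3} Gb/s link: >= {t:.2f} s of communication per step")
```

Because the bound scales inversely with link bandwidth, a fabric a quarter as fast adds four times the synchronization time to every step. A job that fits inside a single server avoids this network cost entirely, since its GPUs can synchronize over NVLink.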

The Economics of Desperation

Let’s talk money. A single eight‑GPU H100 server costs more than a luxury car. Add networking, cooling, and power, and you’re staring at infrastructure bills that make CFOs sweat. Hyperscalers smooth this out with long‑term contracts, but they also lock you into their pace.

Neoclouds flip the psychology. They say: “Pay a premium, get it now.” And founders do. Because in the startup world, time is more expensive than money. That’s the emotional calculus: better to bleed cash today than lose the market tomorrow.

But the economics cut deeper. GPU clusters are energy‑dense, often pushing 40–50 kilowatts per rack. NeoCloud operators face brutal power and cooling constraints, especially in colocation centers not designed for AI loads. That drives up operational costs and forces creative scheduling: shifting deferrable work such as training runs and batch inference to nights when grid prices dip, or colocating near renewable sources to hedge against price volatility. These aren’t abstract problems; they’re line items that decide whether a NeoCloud survives.
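The arithmetic behind those line items is simple enough to sketch. Below, a rack’s IT load is scaled by PUE (power usage effectiveness, the ratio of total facility power to IT power) to account for cooling, then billed under an assumed two‑rate time‑of‑use tariff. Every figure here (rack draw, PUE, prices, off‑peak share) is a hypothetical placeholder, and pushing more energy off‑peak assumes the rack has idle daytime headroom to give up.

```python
def blended_price(peak_price: float, offpeak_price: float, offpeak_share: float) -> float:
    """Average $/kWh when `offpeak_share` of the energy is drawn during off-peak hours."""
    if not 0.0 <= offpeak_share <= 1.0:
        raise ValueError("offpeak_share must be between 0 and 1")
    return offpeak_share * offpeak_price + (1.0 - offpeak_share) * peak_price


def monthly_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float,
                        hours: float = 730.0) -> float:
    """Facility energy bill for one rack over `hours` (about one month by default).

    PUE scales IT power up to include cooling and other facility overhead.
    """
    facility_kwh = it_load_kw * pue * hours
    return facility_kwh * price_per_kwh


if __name__ == "__main__":
    # All inputs are hypothetical placeholders, not figures from any operator.
    rack_kw, pue = 45.0, 1.3
    peak, offpeak = 0.14, 0.08  # $/kWh, assumed time-of-use tariff

    flat = monthly_energy_cost(rack_kw, pue, blended_price(peak, offpeak, 0.5))
    shifted = monthly_energy_cost(rack_kw, pue, blended_price(peak, offpeak, 0.7))
    print(f"flat schedule:    ${flat:,.0f}/month")
    print(f"shifted schedule: ${shifted:,.0f}/month")
```

With these placeholder inputs, moving from half to 70% of the energy drawn off‑peak trims the monthly bill by roughly 11%. The real lever depends on the local tariff and on how much of the workload can actually wait.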

Sovereign AI: Politics Meets Compute

There’s another layer here—national pride. Governments don’t want their AI pipelines running on foreign hyperscalers. They want local control, local data, local sovereignty. NeoCloud Engines, nimble and regionally embedded, become the contractors of choice.

It’s not just compliance. It’s identity. Nations see AI as infrastructure as vital as electricity. Neoclouds, with their willingness to build inside borders, become part of that story. And for founders in those regions, it feels less like renting compute and more like joining a movement.

Operationally, sovereign deployments often mean sacrificing economies of scale. Instead of sprawling data centers, Neoclouds stitch together smaller clusters across multiple sites. That fragmentation complicates orchestration—Kubernetes and Slurm weren’t designed for sovereign silos—and drives up management overhead. Yet the political premium makes it viable: governments will pay for sovereignty even if utilization drops.

Hyperscalers vs. Neoclouds: The Emotional Divide

Hyperscalers are airlines: predictable, regulated, slow to change. Neoclouds are charter jets: expensive, flexible, and thrillingly immediate. One gives you stability, the other gives you adrenaline.

But adrenaline fades. The question is whether Neoclouds can evolve beyond being stopgaps. Can they build trust, brand, and technical depth—or will they remain the hustlers of compute, useful only until hyperscalers catch up?

Here’s where lock‑in bites. Most AI workloads are chained to CUDA, NVIDIA’s proprietary software stack. That makes Neoclouds dependent not just on NVIDIA’s hardware supply but on its software ecosystem. Until alternatives such as AMD’s ROCm gain real traction, Neoclouds live and die by CUDA compatibility. It’s a reminder that infrastructure isn’t just metal; it’s software gravity.

Sustainability or Just Arbitrage?

Here’s the uncomfortable truth: many Neoclouds are arbitrage plays. They exist because NVIDIA’s supply chain is tight and hyperscalers are sluggish. If that loosens, margins collapse.

But some are playing a longer game. They’re investing in custom networking, sovereign partnerships, and inference‑specific services. They’re trying to become more than middlemen. If they succeed, they’ll carve out niches where hyperscalers can’t compete—low‑latency inference, sovereign clusters, specialized workloads.

Capital expenditure is the silent killer here. Building GPU farms requires upfront cash, often financed at punishing rates. Unlike hyperscalers, Neoclouds don’t have balance sheets that can absorb years of negative margins. Many need sustained utilization north of 80% to stay solvent. Miss that mark, and the economics unravel fast.
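A rough break‑even model shows why a utilization threshold like that matters. The sketch below turns a GPU’s purchase price into a level annual payment with the standard loan‑amortization (annuity) formula, adds a per‑hour operating cost, and divides by the rental price to get the share of hours that must be sold. The capex, financing rate, repayment period, operating cost, and price are all hypothetical inputs, not figures from any operator.

```python
HOURS_PER_YEAR = 365 * 24


def hourly_cost_per_gpu(capex_per_gpu: float, years: float, annual_rate: float,
                        opex_per_gpu_hour: float) -> float:
    """Fully loaded cost of owning one GPU for one hour, whether or not it is rented.

    Capex is financed as a level-payment loan over `years` at `annual_rate`
    (standard annuity formula), then spread over every hour of the year.
    """
    if annual_rate == 0:
        annual_payment = capex_per_gpu / years
    else:
        annual_payment = capex_per_gpu * annual_rate / (1 - (1 + annual_rate) ** -years)
    return annual_payment / HOURS_PER_YEAR + opex_per_gpu_hour


def break_even_utilization(hourly_cost: float, price_per_gpu_hour: float) -> float:
    """Share of hours that must be sold at `price_per_gpu_hour` to cover `hourly_cost`."""
    return hourly_cost / price_per_gpu_hour


def margin_per_gpu_hour(hourly_cost: float, price_per_gpu_hour: float,
                        utilization: float) -> float:
    """Average profit (or loss, if negative) per GPU per wall-clock hour."""
    return price_per_gpu_hour * utilization - hourly_cost


if __name__ == "__main__":
    # Hypothetical inputs: $40k per GPU including its share of server and network,
    # financed over 4 years at 12%, $0.50/h for power, space, and staff, rented at $2.50/h.
    cost = hourly_cost_per_gpu(40_000, years=4, annual_rate=0.12, opex_per_gpu_hour=0.50)
    print(f"cost per GPU-hour:      ${cost:.2f}")
    print(f"break-even utilization: {break_even_utilization(cost, 2.50):.0%}")
    for u in (0.65, 0.90):
        print(f"margin at {u:.0%} utilization: ${margin_per_gpu_hour(cost, 2.50, u):+.2f}/h")
```

These placeholder numbers were picked to land near the 80% figure; nudging the rental price or the financing rate moves the threshold sharply, which is exactly why thinly capitalized operators have so little room for error.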

Wait and Watch for the Next 3–5 Years

The next three to five years in this industry won’t be a straight line. If NVIDIA keeps its grip on the accelerator market, Neoclouds will continue to thrive as middlemen of scarcity. But if alternative chips finally break through—whether AMD’s MI series, Intel’s Gaudi, or some custom ASIC designed purely for inference—the ground shifts. Suddenly, the premium that Neoclouds charge looks less like survival pricing and more like a tax on impatience.

Inference itself is another wild card. Right now it’s expensive, clunky, and power‑hungry. Whoever figures out how to serve models at a fraction of today’s cost will redraw the economics of the entire sector. That breakthrough could come from hardware, but it might just as easily come from clever software or architectural changes. If inference gets cheap, the urgency that fuels Neoclouds evaporates.

And then there’s politics. Sovereign AI isn’t a passing fad—it’s a declaration of independence. Nations want their own compute, their own clusters, their own control. Neoclouds embedded in those ecosystems may outlast their arbitrage peers, not because they’re cheaper, but because they’re local. In geopolitics, proximity matters more than price.

For Now

The cloud was once sold as infinite, a utility you never had to think about. Today, Neoclouds remind us it’s finite, contested, and deeply human—because behind every cluster is a team hustling to wire machines together, and behind every contract is a founder desperate to keep their product alive.

Whether these operators become lasting institutions or fade once the GPU famine ends almost doesn’t matter. Their existence is proof that the cloud has entered a new phase: one defined not by abundance, but by access. And in that shift, the future of AI infrastructure is being written—not in glossy hyperscaler roadmaps, but in the scrappy, imperfect, very human scramble for compute.